Does A/B Testing Hurt Your Search Engine Optimization (SEO)?

When it comes to website optimization, two crucial factors often seem to be at odds: improving conversion rates through A/B testing and maintaining strong SEO performance. Many website owners worry that running A/B tests might harm their search rankings. Let's dive into whether these concerns are justified and how to balance both effectively.

Understanding Website Testing Types

Before we address the main question, it's important to distinguish between two primary types of testing:

A/B Testing

Creates multiple versions of the same webpage, showing different variations to different users while maintaining the same URL. For example, testing different headlines or button colors to improve conversion rates. Learn more about A/B testing fundamentals.

SEO Split Testing

Involves testing changes across groups of similar pages with different URLs. This type of testing is specifically designed for SEO purposes and typically involves testing elements like title tags or meta descriptions.

Does A/B Testing Hurt SEO?

The short answer is no: A/B testing doesn't harm SEO when implemented correctly. Google has published guidance on running website tests without hurting search performance, and it offered its own testing tool, Google Optimize, until that product was sunset in September 2023. However, proper implementation is crucial to maintain your search rankings while optimizing for conversions.

Best Practices for SEO-Safe A/B Testing

Technical Implementation

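- Use rel="canonical" tags on variant URLs, pointing to the original page, to prevent duplicate content issues

- When a test redirects visitors to a variant URL, use a 302 (temporary) redirect rather than a 301 (permanent) one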

- Ensure all test variations are accessible to search engines

- Don't cloak content from search engines - learn more about cloaking guidelines
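As a rough illustration of the first two points, the sketch below builds the canonical tag a variant page could carry and the response a server could send when redirecting a visitor to that variant. The URLs and function names are hypothetical and not taken from any particular testing platform.

```python
from html import escape
from http import HTTPStatus

# Hypothetical URLs used only for illustration.
ORIGINAL_URL = "https://example.com/pricing"
VARIANT_URL = "https://example.com/pricing-b"


def canonical_tag(original_url: str) -> str:
    """Build the <link> element a variant page would place in its <head>."""
    return f'<link rel="canonical" href="{escape(original_url, quote=True)}">'


def temporary_redirect(variant_url: str) -> tuple[int, dict[str, str]]:
    """Return the status code and headers for a temporary (302) redirect."""
    return HTTPStatus.FOUND.value, {"Location": variant_url}


if __name__ == "__main__":
    print(canonical_tag(ORIGINAL_URL))
    print(temporary_redirect(VARIANT_URL))
```

The variant page points back to the original URL, and the redirect is marked temporary, so the original page stays the one search engines are asked to index.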

Test Duration Management

- Keep tests time-limited (typically 2-4 weeks maximum)

- Implement winners promptly once statistical significance is reached

- Document and track all testing changes
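The article does not say which statistical test to use. One common choice for comparing two conversion rates is a two-proportion z-test, sketched below with the standard library only. The function name, the sample numbers, and the 0.05 threshold are assumptions for illustration.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) comparing the conversion rates of A and B."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis that A and B perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; conversion rates are all 0% or 100%")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 100/1000 conversions for A, 150/1000 for B.
    z, p = two_proportion_z_test(100, 1000, 150, 1000)
    print(f"z = {z:.2f}, p = {p:.4f}, significant at 0.05: {p < 0.05}")
```

Checking the p-value only at a sample size decided in advance, rather than stopping the first time it dips below the threshold, helps avoid declaring a winner too early.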

Content Considerations

- Maintain consistent main content across variations

- Focus on layout and design changes rather than core content

- Avoid testing drastically different content versions simultaneously

Speed Impact and Performance

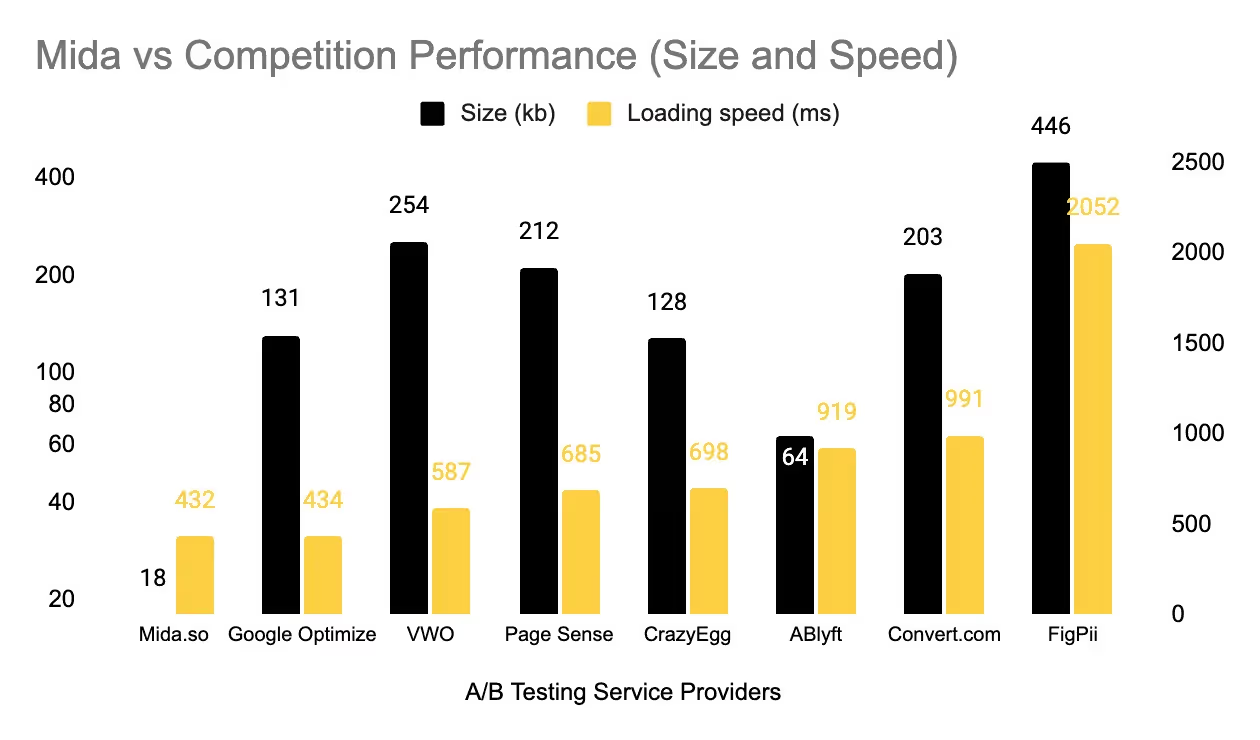

One often overlooked aspect of A/B testing is its impact on site speed, which directly affects both SEO and conversions. Most modern A/B testing platforms use asynchronous loading to minimize speed impact. However, it's crucial to monitor your site's performance metrics during testing periods.

Here are speed test results for some of the A/B testing tools in the industry:

Balancing CRO and SEO

Sometimes, what's best for conversion rate optimization (CRO) might seem to conflict with SEO best practices. For instance:

- Short vs. long-form content

- Above-the-fold calls to action vs. content-rich headers

- Image-heavy designs vs. fast-loading pages

The key is finding the sweet spot where both disciplines complement each other:

- Base experiments on data-driven insights

- Test incremental changes rather than complete overhauls

- Monitor both SEO metrics and conversion rates during tests

- Document successful tests for future optimization

Common Concerns Addressed

Cloaking

A/B testing is not considered cloaking as long as you're not intentionally showing different content to search engines versus users. Google understands and supports legitimate testing practices. Read more about Google's stance on cloaking.
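One way to stay clear of cloaking is to assign variations without ever looking at who is requesting the page. The sketch below hashes a visitor identifier to pick a variation. The function name, experiment name, and identifiers are hypothetical, and real testing tools may assign visitors differently.

```python
import hashlib


def assign_variant(
    visitor_id: str,
    experiment: str,
    variants: tuple[str, ...] = ("control", "variant_b"),
) -> str:
    """Pick a variation from a hash of the experiment name and visitor ID.

    The function takes no user-agent argument, so a crawler goes through the
    same assignment logic as any other visitor.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    # The same visitor always lands in the same bucket for a given experiment.
    print(assign_variant("visitor-123", "headline-test"))
```

Because the assignment depends only on the experiment name and visitor ID, a returning visitor sees the same variation on every visit.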

Duplicate Content

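While A/B testing creates multiple versions of the same page, duplicate content becomes a concern mainly when those versions are served from separate URLs. In that case, a rel="canonical" tag on each variation pointing to the original page tells search engines which version to index.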

Traffic Fluctuations

Minor ranking fluctuations during testing are normal and typically temporary. Once you implement the winning version, rankings usually stabilize quickly. Learn more about search traffic analysis.

Conclusion

A/B testing and SEO can absolutely work together harmoniously when implemented correctly. The key is following best practices, monitoring results, and using the right tools. With platforms like Mida handling the technical complexities, you can focus on what matters most - improving your website's performance and user experience.

Ready to start testing without compromising your SEO? Try Mida today and experience the perfect balance of conversion optimization and search engine performance.
